9. Tower Logging and Aggregation

Logging is a standalone feature introduced in Ansible Tower 3.1.0 that sends detailed logs to several kinds of third-party external log aggregation services. Services connected to this data feed are a useful way to gain insight into Tower usage or technical trends. The data can be used to analyze events in the infrastructure, monitor for anomalies, and correlate events from one service with events in another. The types of data that are most useful to Tower are job fact data, job events/job runs, activity stream data, and log messages. The data is sent in JSON format over an HTTP connection, using minimal service-specific tweaks engineered in a custom handler or via an imported library.
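As a rough illustration of the "JSON over HTTP via a custom handler" idea, the following sketch uses Python's standard `logging` module to render a log record as a JSON string. The class name `JsonFormatter` and the field names in the payload are assumptions made for this example; they are not Tower's actual handler or schema.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render a log record as one JSON object (field names are illustrative)."""

    def format(self, record):
        payload = {
            "logger_name": record.name,
            "level": record.levelname,
            "message": record.getMessage(),
        }
        return json.dumps(payload)


def format_example():
    # Build a record by hand so the example runs without any logging setup.
    record = logging.LogRecord(
        "awx", logging.INFO, "example.py", 1, "job %s finished", ("42",), None
    )
    return JsonFormatter().format(record)


example_line = format_example()
```

A handler that forwards such strings to an aggregator would attach this formatter and send each formatted line as the body of an HTTP request.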

9.1. Logging Aggregator Services

The logging aggregator service works with the following monitoring and data analysis systems:

9.1.1. Splunk

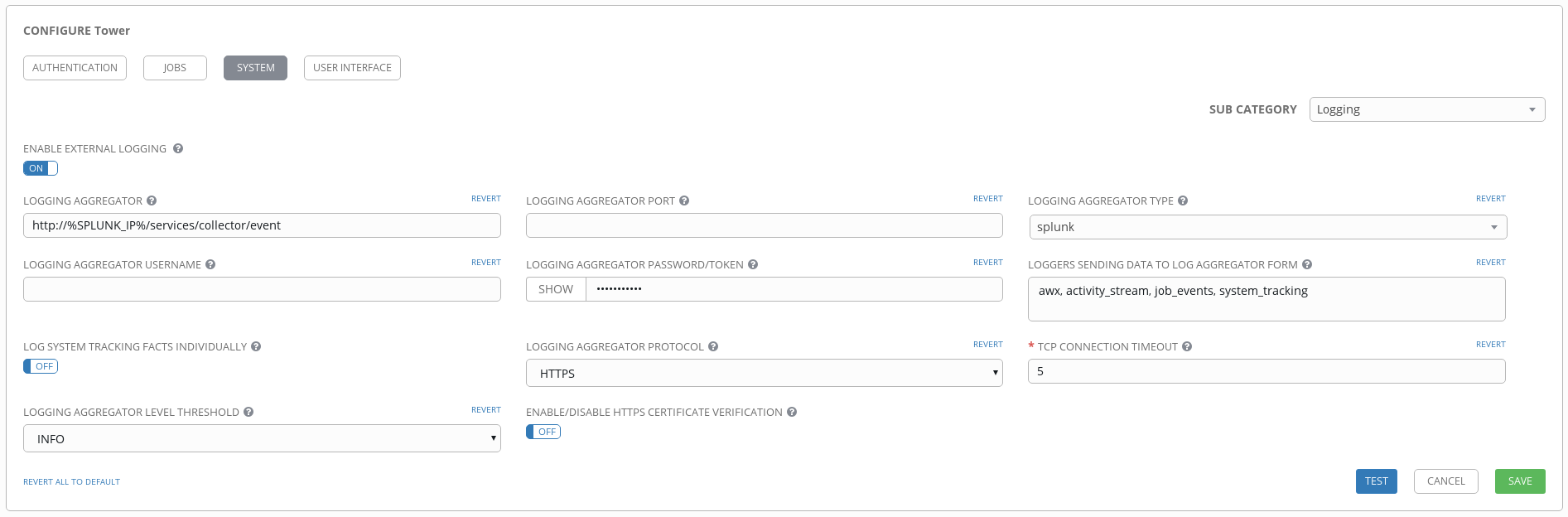

Ansible Tower’s Splunk logging integration uses the Splunk HTTP Event Collector (HEC). When configuring a Splunk logging aggregator, add the full URL of the HTTP Event Collector host, as in the following example:

https://yourtowerfqdn.com/api/v1/settings/logging

    {
        "LOG_AGGREGATOR_HOST": "https://yoursplunk:8088/services/collector/event",
        "LOG_AGGREGATOR_PORT": null,
        "LOG_AGGREGATOR_TYPE": "splunk",
        "LOG_AGGREGATOR_USERNAME": "",
        "LOG_AGGREGATOR_PASSWORD": "$encrypted$",
        "LOG_AGGREGATOR_LOGGERS": [
            "awx",
            "activity_stream",
            "job_events",
            "system_tracking"
        ],
        "LOG_AGGREGATOR_INDIVIDUAL_FACTS": false,
        "LOG_AGGREGATOR_ENABLED": true,
        "LOG_AGGREGATOR_TOWER_UUID": ""
    }
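The settings above could also be submitted programmatically. The sketch below only builds the HTTP request and does not send it. The use of `PATCH`, HTTP Basic authentication, and the `admin`/`password` credentials are assumptions for illustration; `build_settings_request` is a hypothetical helper, not part of any Tower client library.

```python
import base64
import json
import urllib.request


def build_settings_request(endpoint_url, username, password, settings):
    """Build (but do not send) a request that updates the logging settings."""
    credentials = f"{username}:{password}".encode("utf-8")
    headers = {
        "Content-Type": "application/json",
        # Basic authentication is assumed here for simplicity.
        "Authorization": "Basic " + base64.b64encode(credentials).decode("ascii"),
    }
    return urllib.request.Request(
        url=endpoint_url,
        data=json.dumps(settings).encode("utf-8"),
        headers=headers,
        method="PATCH",  # assumed: update only the fields that are supplied
    )


settings_request = build_settings_request(
    "https://yourtowerfqdn.com/api/v1/settings/logging",
    "admin",      # hypothetical account name
    "password",   # hypothetical password
    {
        "LOG_AGGREGATOR_HOST": "https://yoursplunk:8088/services/collector/event",
        "LOG_AGGREGATOR_TYPE": "splunk",
        "LOG_AGGREGATOR_ENABLED": True,
    },
)
# Passing settings_request to urllib.request.urlopen() would submit the change.
```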

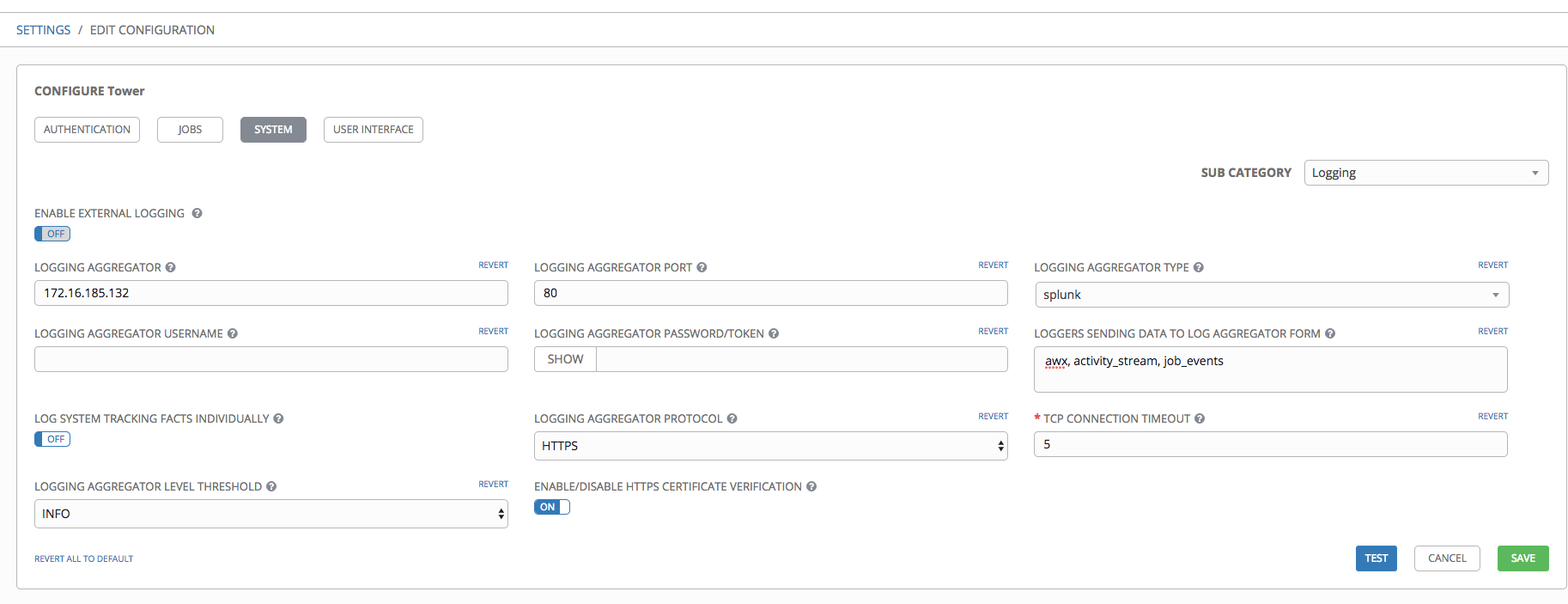

The Splunk HTTP Event Collector listens on port 8088 by default, so you must provide the full HEC event URL (including the port) for incoming requests to be processed successfully. These values are entered in the example below:

For further instructions on configuring the HTTP Event Collector, refer to the Splunk documentation.

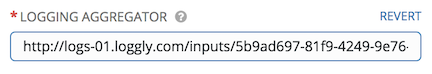
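To check that the collector accepts events independently of Tower, a test event can be posted directly to the HEC URL. The sketch below only constructs the request. It assumes the standard HEC conventions of an `Authorization: Splunk <token>` header and a JSON body with an `event` key; the token value and the helper name `build_hec_request` are placeholders.

```python
import json
import urllib.request


def build_hec_request(hec_url, token, event):
    """Build (but do not send) a POST request carrying one HEC event."""
    body = json.dumps({"event": event}).encode("utf-8")
    return urllib.request.Request(
        url=hec_url,
        data=body,
        headers={
            "Authorization": f"Splunk {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


hec_request = build_hec_request(
    "https://yoursplunk:8088/services/collector/event",
    "00000000-0000-0000-0000-000000000000",  # placeholder token
    {"message": "test event"},
)
# Passing hec_request to urllib.request.urlopen() would deliver the event.
```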

9.1.2. Loggly

To set up the sending of logs through Loggly’s HTTP endpoint, refer to https://www.loggly.com/docs/http-endpoint/. Loggly uses the URL convention http://logs-01.loggly.com/inputs/TOKEN/tag/http/, which is shown entered in the Logging Aggregator field in the example below:

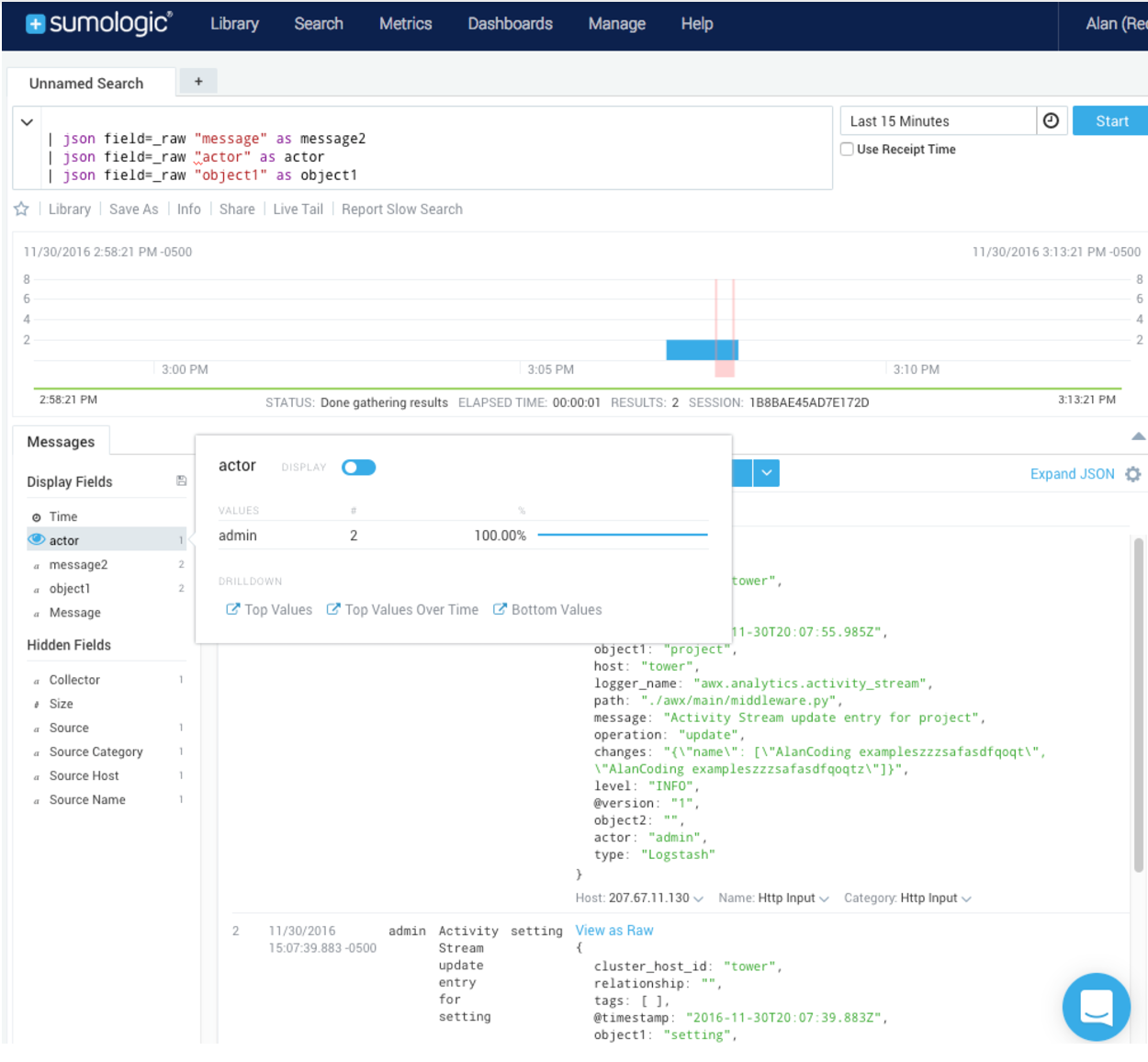
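The URL convention can be expressed as a small helper that substitutes the customer token. `loggly_endpoint` is a hypothetical function name, and treating the `http` path segment as a configurable tag is an assumption of this sketch.

```python
def loggly_endpoint(token, tag="http"):
    """Return the Loggly HTTP endpoint URL for the given customer token."""
    return f"http://logs-01.loggly.com/inputs/{token}/tag/{tag}/"


loggly_url = loggly_endpoint("TOKEN")
```

The resulting string is the value to enter in the Logging Aggregator field.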

9.1.3. Sumologic

In Sumologic, create search criteria containing the JSON files that provide the parameters used to collect the data you need.

9.1.4. Elastic stack (formerly ELK stack)

You can visualize information from the Tower logs in Kibana, captured via an Elastic stack consuming the logs. Ansible Tower is compatible with the Logstash connector and with the Elasticsearch data model. You can use the example settings, together with either a library or the provided examples, to stand up containers that demo the Elastic stack end-to-end.

Tower uses Logstash configuration to specify the source of the logs. Use this template to provide the input:

    input {
        http {
            port => 8085
            user => logger_username
            password => "password"
        }
    }

Add this to your configuration file in order to process the message content:

    filter {
        json {
            source => "message"
        }
    }
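To make the effect of the `json` filter concrete, the sketch below mimics it in Python: the string in the `message` field is parsed as JSON and its keys are merged into the top level of the event. This is an approximation for illustration only; `apply_json_filter` and the sample event are hypothetical, and error handling for malformed input is omitted.

```python
import json


def apply_json_filter(event, source="message"):
    """Parse event[source] as JSON and merge its keys into a copy of the event."""
    parsed = json.loads(event[source])
    merged = dict(event)   # leave the caller's event untouched
    merged.update(parsed)  # parsed keys become top-level fields
    return merged


raw_event = {
    "host": "tower",
    "message": '{"logger_name": "awx", "level": "INFO"}',
}
filtered_event = apply_json_filter(raw_event)
```

After filtering, fields such as `logger_name` and `level` can be queried directly instead of being buried inside the raw message string.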

9.2. Set Up Logging with Tower

To set up logging to any of the aggregator types:

- From the Settings menu screen, click Configure Tower.

- Select the System tab.

- Select Logging from the Sub Category drop-down menu.

- Set the configurable options from the fields provided:

- Enable External Logging: Click the toggle button to ON if you want to send logs to an external log aggregator.

- Logging Aggregator: Enter the hostname or IP address to which you want to send logs.

- Logging Aggregator Port: Specify the port for the aggregator if it requires one.

Note

When the connection type is HTTPS, you can enter the hostname as a URL with a port number, in which case you do not need to enter the port again. TCP and UDP connections, however, are determined by the hostname and port number combination rather than by a URL, so for a TCP or UDP connection, supply the port in the Logging Aggregator Port field. If a URL is entered in the host field (the Logging Aggregator field) instead, its hostname portion is extracted as the actual hostname.

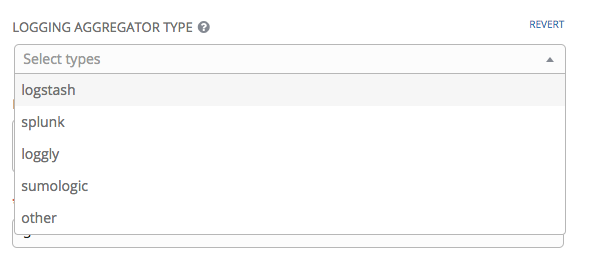

- Logging Aggregator Type: Click to select the aggregator service from the drop-down menu.

- Logging Aggregator Username: Enter the username for the logging aggregator, if required.

- Logging Aggregator Password/Token: Enter the password or token for the logging aggregator, if required.

- Loggers to Send Data to the Log Aggregator Form: All four types of data are pre-populated by default. Click the tooltip icon next to the field for additional information on each data type. Delete the data types you do not want.

- Log System Tracking Facts Individually: Click the tooltip icon for additional information on whether to turn this option on or leave it off, which is the default.

- Logging Aggregator Protocol: Click to select a connection type (protocol) to communicate with the log aggregator. Subsequent options vary depending on the selected protocol.

- TCP Connection Timeout: Specify the connection timeout in seconds. This option is only applicable to HTTPS and TCP log aggregator protocols.

- Logging Aggregator Level Threshold: Select the level of severity you want the log handler to report.

- Enable/Disable HTTPS Certificate Verification: Certificate verification is enabled by default for the HTTPS log protocol. Click the toggle button to OFF if you do not want the log handler to verify the HTTPS certificate sent by the external log aggregator before establishing a connection.

- Review your entries for your chosen logging aggregation. Below is an example of one set up for Splunk:

- To verify that your configuration is set up correctly, click Test. This verifies the logging configuration by sending a test log message and checking that the response code is OK.

- When done, click Save to apply the settings or Cancel to abandon the changes.
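The host and port rule described in the note on the Logging Aggregator and Logging Aggregator Port fields can be sketched as follows. This illustrates the rule as stated in the note; it is not Tower's actual implementation, and `resolve_target` is a hypothetical name.

```python
from urllib.parse import urlsplit


def resolve_target(host_field, port_field, protocol):
    """Return the (hostname, port) pair implied by the two form fields."""
    # Prefix bare hostnames with "//" so urlsplit treats them as a network location.
    parts = urlsplit(host_field if "://" in host_field else "//" + host_field)
    if protocol == "https":
        # For HTTPS, a port embedded in the URL is sufficient.
        port = parts.port or port_field
    else:
        # For TCP and UDP, only the dedicated port field is used.
        port = port_field
    return parts.hostname, port


https_target = resolve_target(
    "https://yoursplunk:8088/services/collector/event", None, "https"
)
udp_target = resolve_target("https://logs.example.com:9000", 514, "udp")
tcp_target = resolve_target("logs.example.com", 514, "tcp")
```

In the UDP case the URL's own port is ignored and only the hostname portion is kept, which matches the behavior the note describes.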