21. Jobs

A job is an instance of Tower launching an Ansible playbook against an inventory of hosts.

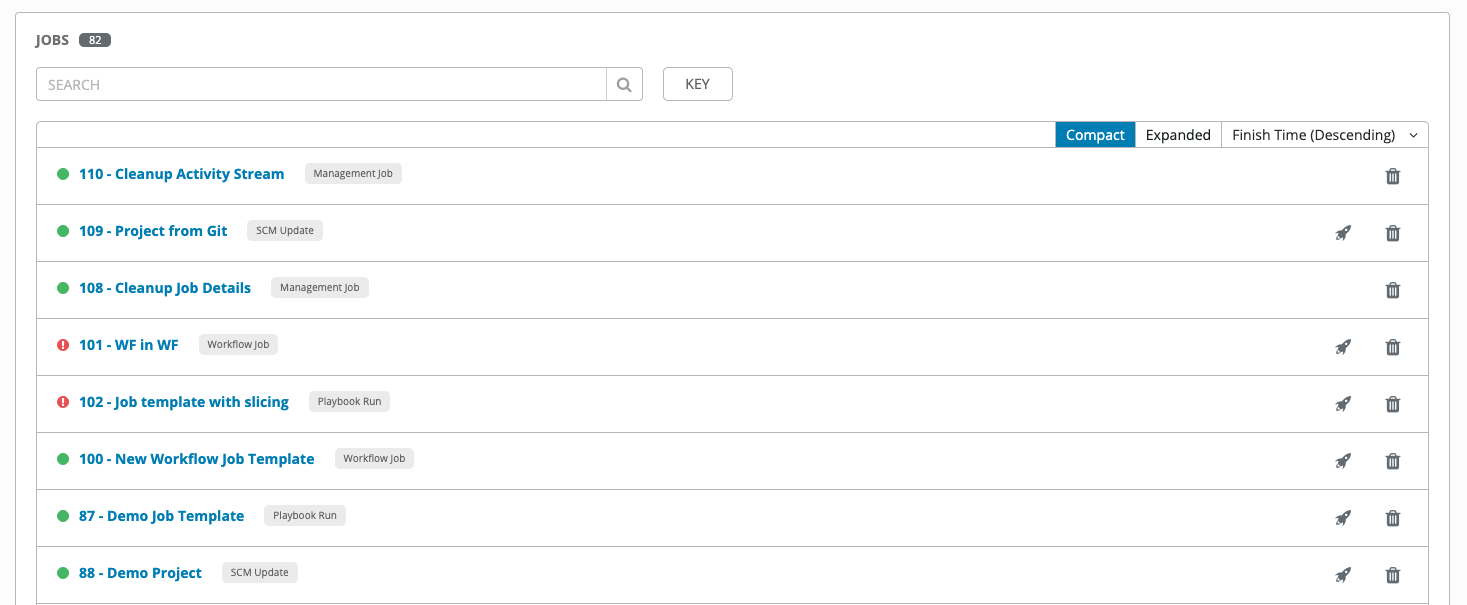

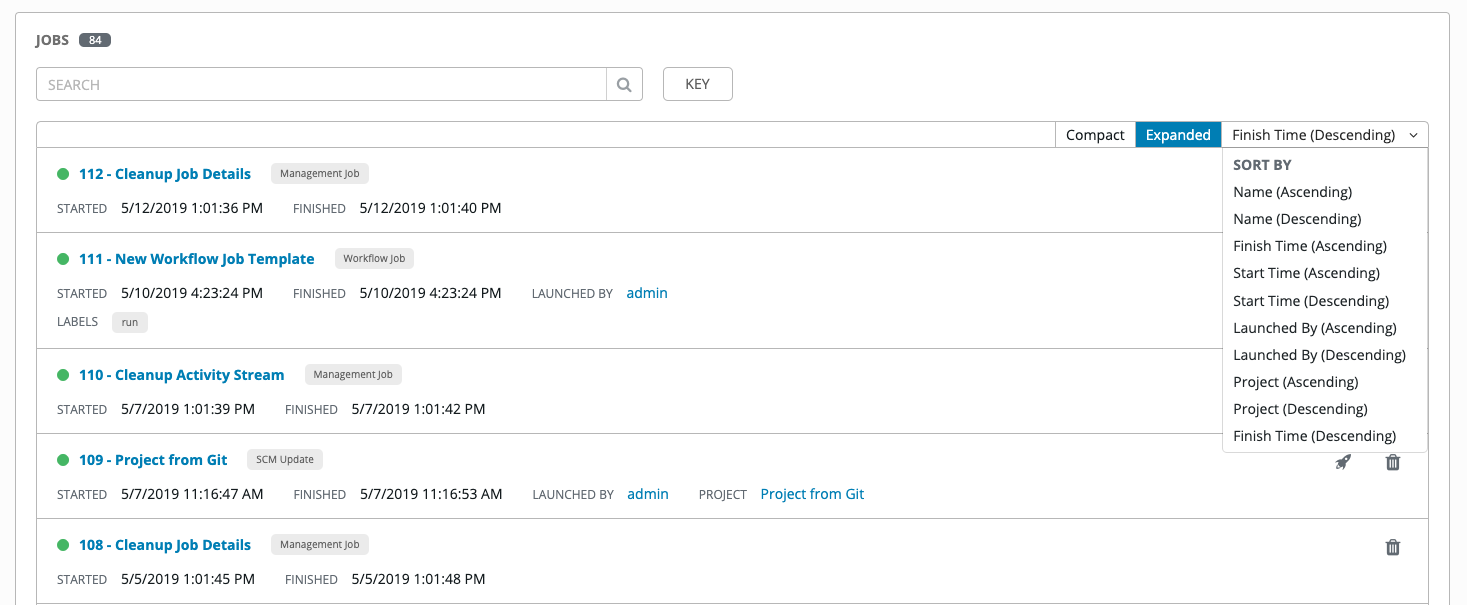

The Jobs link displays a list of jobs and their statuses: completed successfully, failed, or active (running). The default view is collapsed (Compact) and shows the job ID, job name, and job type, but you can expand it to see more information. You can sort this list by various criteria and search to filter the jobs of interest.

Actions you can take from this screen include viewing the details and standard output of a particular job, relaunching jobs, or removing jobs.

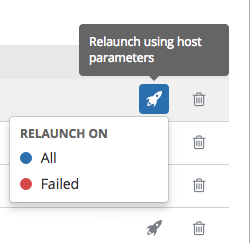

Starting with Ansible Tower 3.3, you can relaunch the most recent job from the list view. You can re-run the job on all hosts in the specified inventory, including hosts that already had a successful run, or re-run it only on the failed hosts. Re-running on failed hosts only avoids running the playbook again on hosts that already succeeded, which lowers the load on the Ansible Tower nodes.

The relaunch operation only applies to relaunches of playbook runs and does not apply to project/inventory updates, system jobs, workflow jobs, etc.

Selecting All relaunches all the hosts.

Selecting Failed relaunches all failed and unreachable hosts.

When it relaunches, you remain on the same page.
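For scripted use, the same All or Failed choice could be expressed as a request body. The sketch below only builds the request and never sends it. The endpoint path `/api/v2/jobs/<id>/relaunch/`, the `hosts` field, and the host name `tower.example.com` are assumptions that should be checked against the API reference for your Tower version.

```python
import json
from urllib.request import Request


def build_relaunch_request(base_url: str, job_id: int, hosts: str = "all") -> Request:
    """Build (but do not send) a relaunch request for a playbook run.

    The URL layout and the "hosts" field are assumptions, not confirmed
    by this chapter.
    """
    if hosts not in ("all", "failed"):
        raise ValueError("hosts must be 'all' or 'failed'")
    url = f"{base_url.rstrip('/')}/api/v2/jobs/{job_id}/relaunch/"
    body = json.dumps({"hosts": hosts}).encode("utf-8")
    return Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json"},
    )


# Hypothetical host name and job ID, used only for illustration.
req = build_relaunch_request("https://tower.example.com", 42, hosts="failed")
print(req.get_method(), req.full_url)
print(req.data.decode("utf-8"))
```

Passing `hosts="failed"` mirrors the Failed option, which covers failed and unreachable hosts; authentication is deliberately left out of the sketch.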

Use the Tower Search feature to look up jobs by various criteria. For details about using the Tower Search, refer to the Search chapter.

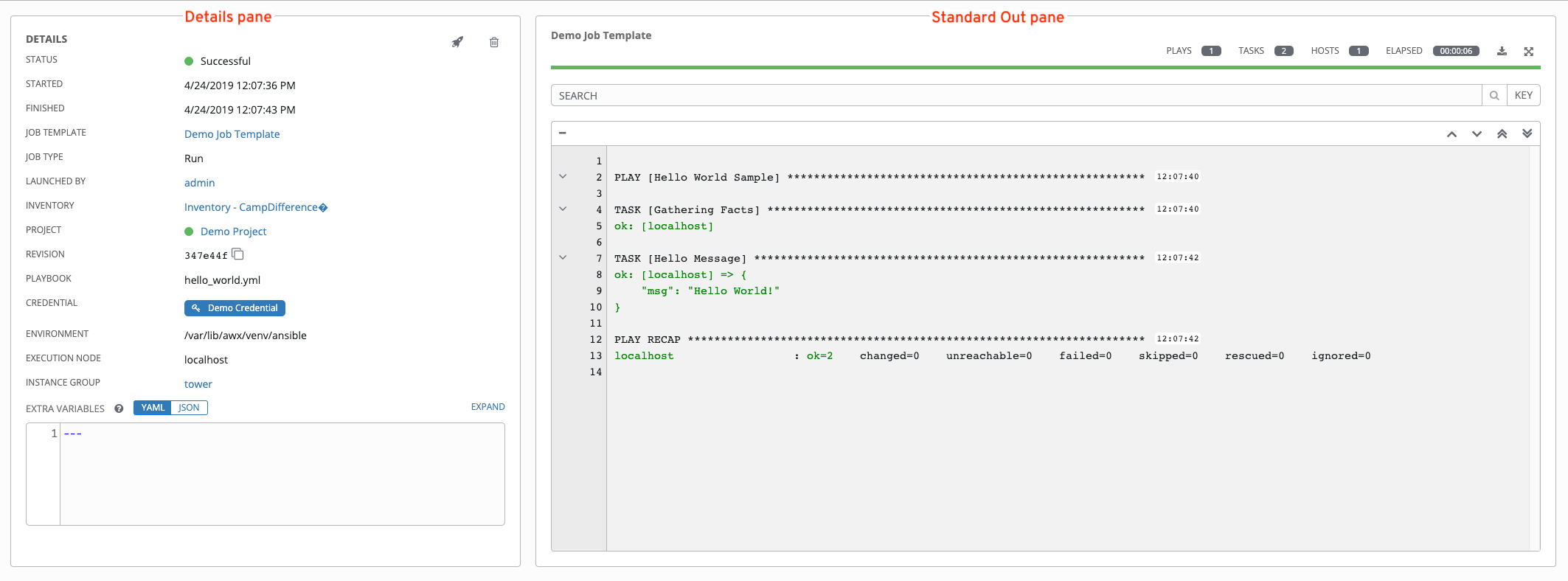

Clicking on any type of job takes you to the Job Details View for that job, which consists of two sections:

The Details pane provides information and status about the job

The Standard Out pane displays the job processes and output

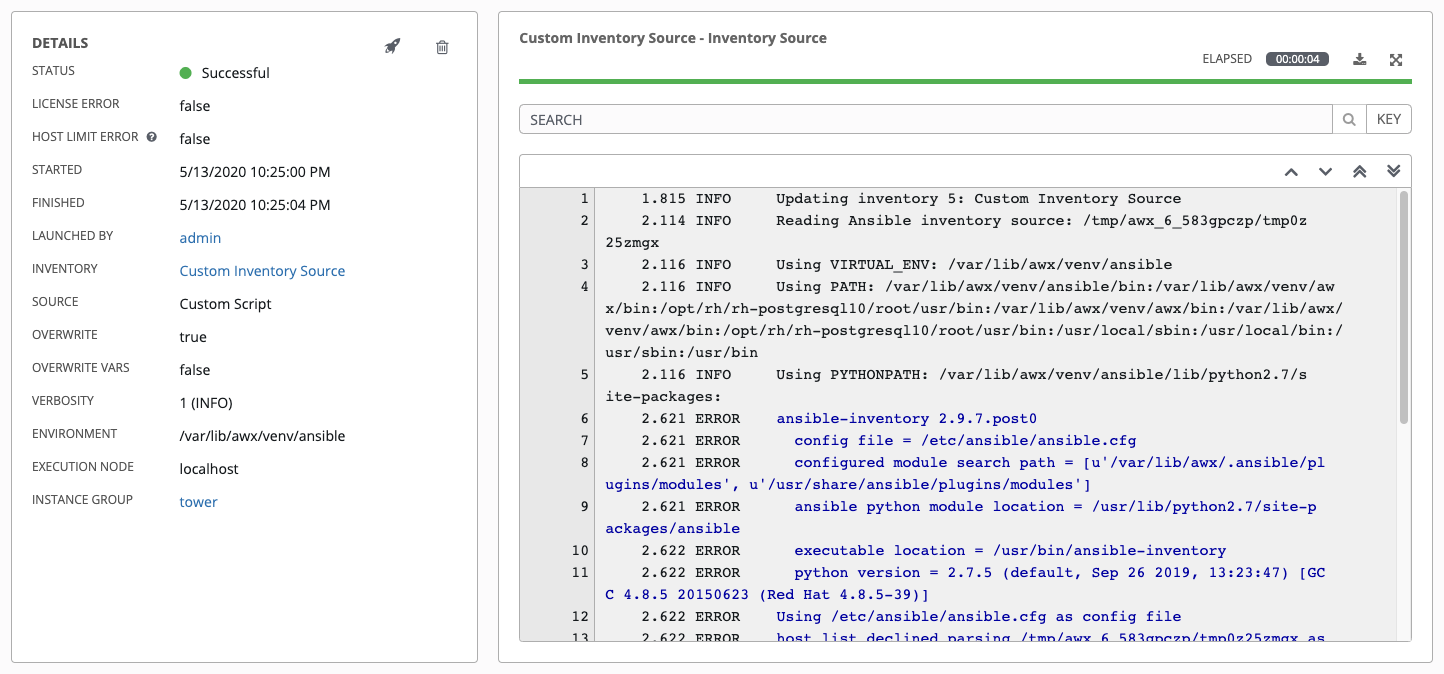

21.1. Job Details - Inventory Sync

Note

Starting in Ansible Tower 3.7, an inventory update can be performed while a related job is running. In cases where you have a big project (around 10 GB), disk space on /tmp may be an issue.

21.1.1. Details

The Details pane shows the basic status of the job and its start time. The icons at the top right corner of the Details pane allow you to relaunch or delete the job.

The Details pane also provides details on the job execution:

Status: Can be any of the following:

Pending - The inventory sync has been created, but not queued or started yet. Any job, not just inventory source syncs, will stay in pending until it’s actually ready to be run by the system. Reasons for inventory source syncs not being ready include dependencies that are currently running (all dependencies must be completed before the next step can execute), or there is not enough capacity to run in the locations it is configured to.

Waiting - The inventory sync is in the queue waiting to be executed.

Running - The inventory sync is currently in progress.

Successful - The inventory sync job succeeded.

Failed - The inventory sync job failed.

License Error: Only shown for Inventory Sync jobs. If this is True, the hosts added by the inventory sync caused Tower to exceed the licensed number of managed hosts.

Host Limit Error: Denotes the job’s inventory belongs to an organization that has exceeded its limit of hosts as defined by the system administrator.

Started: The timestamp of when the job was initiated by Tower.

Finished: The timestamp of when the job was completed.

Launched By: The name of the user who launched the job.

Inventory: The name of the associated inventory group.

Source: The type of cloud inventory.

Overwrite: If True, any hosts and groups that were previously present on the external source but are now removed, are removed from the Tower inventory. Hosts and groups that were not managed by the inventory source are promoted to the next manually created group or if there is no manually created group to promote them into, they are left in the “all” default group for the inventory. If False, local child hosts and groups not found on the external source remain untouched by the inventory update process.

Overwrite Vars: If True, all variables for child groups and hosts are removed and replaced by those found on the external source. If False, a merge was performed, combining local variables with those found on the external source.

Verbosity: The level of output Ansible will produce for inventory source update jobs.

Environment: The virtual environment used.

Execution node: The node used to execute the job.

Instance Group: The name of the instance group used with this job (tower is the default instance group).

By clicking on these items, where appropriate, you can view the corresponding job templates, projects, and other Tower objects.

21.1.2. Standard Out

The Standard Out pane shows the full results of running the Inventory Sync playbook. This shows the same information you would see if you ran it through the Ansible command line, and can be useful for debugging. The icons at the top right corner of the Standard Out pane allow you to toggle the output as a main view or to download the output.

Starting in Ansible Tower 3.3, ANSIBLE_DISPLAY_ARGS_TO_STDOUT is set to False by default for all playbook runs, which matches Ansible's default behavior. As a result, Tower no longer displays task arguments in task headers in the Job Detail interface, to avoid leaking sensitive module parameters to stdout. If you wish to restore the prior behavior (despite the security implications), you can set ANSIBLE_DISPLAY_ARGS_TO_STDOUT to True via the AWX_TASK_ENV configuration setting. For more details, refer to the ANSIBLE_DISPLAY_ARGS_TO_STDOUT documentation.
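As an illustration, the override can be thought of as one extra key in the AWX_TASK_ENV mapping. The sketch below builds that mapping and prints it as JSON. Where the value is entered (settings file, API, or user interface) depends on your installation and is not shown, and both the helper name and the string form `"True"` are assumptions.

```python
import json


def with_display_args(task_env: dict, enabled: bool) -> dict:
    """Return a copy of an AWX_TASK_ENV-style mapping with the flag set."""
    updated = dict(task_env)  # leave the caller's mapping untouched
    # Environment variables are strings, so the boolean is stored as text
    # (assumed form; check what your Tower version expects).
    updated["ANSIBLE_DISPLAY_ARGS_TO_STDOUT"] = "True" if enabled else "False"
    return updated


awx_task_env = with_display_args({}, enabled=True)
print(json.dumps(awx_task_env, indent=2))
```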

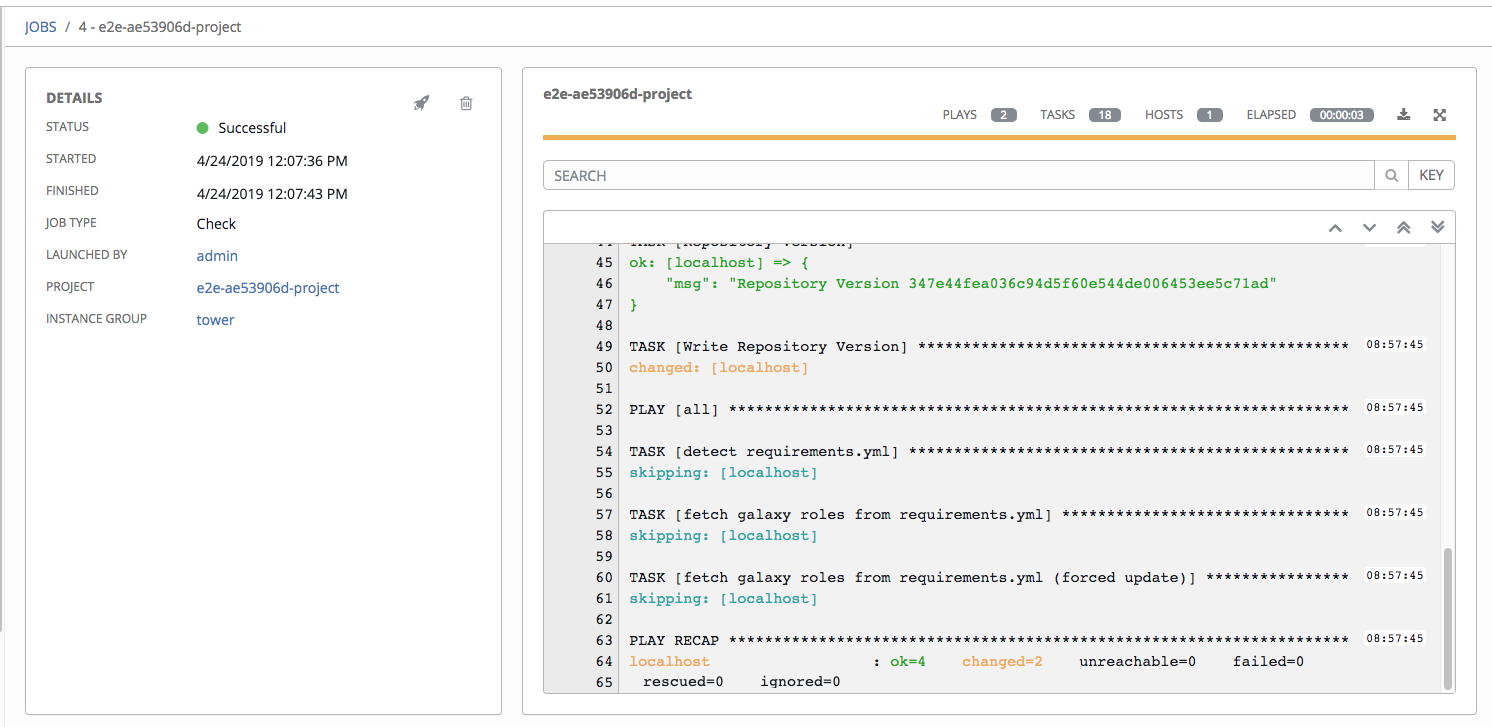

21.2. Job Details - SCM

21.2.1. Details

The Details pane shows the basic status of the job and its start time. The icons at the top right corner of the Details pane allow you to relaunch or delete the job.

The Details pane provides details on the job execution:

Name: The name of the associated inventory group.

Status: Can be any of the following:

Pending - The SCM job has been created, but not queued or started yet. Any job, not just SCM jobs, will stay in pending until it’s actually ready to be run by the system. Reasons for SCM jobs not being ready include dependencies that are currently running (all dependencies must be completed before the next step can execute), or there is not enough capacity to run in the locations it is configured to.

Waiting - The SCM job is in the queue waiting to be executed.

Running - The SCM job is currently in progress.

Successful - The last SCM job succeeded.

Failed - The last SCM job failed.

Started: The timestamp of when the job was initiated by Tower.

Finished: The timestamp of when the job was completed.

Elapsed: The total time the job took.

Launch Type: Manual or Scheduled.

Project: The name of the project.

By clicking on these items, where appropriate, you can view the corresponding job templates, projects, and other Tower objects.

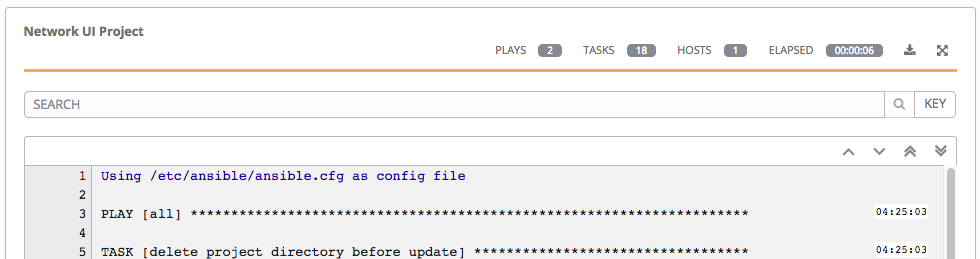

21.2.2. Standard Out

The Standard Out pane shows the full results of running the SCM Update. This shows the same information you would see if you ran it through the Ansible command line, and can be useful for debugging. The icons at the top right corner of the Standard Out pane allow you to toggle the output as a main view or to download the output.

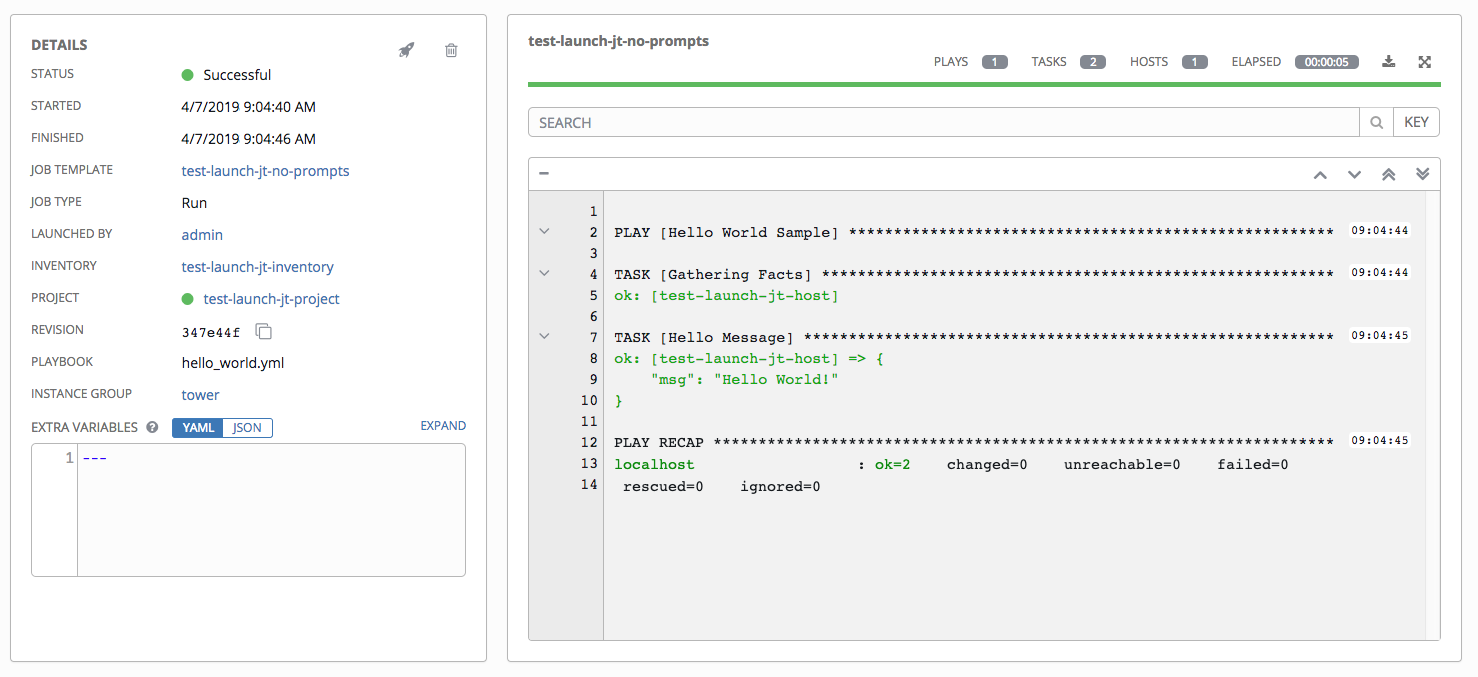

21.3. Job Details - Playbook Run

The Job Details View for a Playbook Run job is also accessible after launching a job from the Job Templates page.

21.3.1. Details

The Details pane shows the basic status of the job and its start time. The icons at the top right corner of the Details pane allow you to relaunch or delete the job.

The Details pane provides details on the job execution:

Status: Can be any of the following:

Pending - The playbook run has been created, but not queued or started yet. Any job, not just playbook runs, will stay in pending until it’s actually ready to be run by the system. Reasons for playbook runs not being ready include dependencies that are currently running (all dependencies must be completed before the next step can execute), or there is not enough capacity to run in the locations it is configured to.

Waiting - The playbook run is in the queue waiting to be executed.

Running - The playbook run is currently in progress.

Successful - The last playbook run succeeded.

Failed - The last playbook run failed.

Template: The name of the job template from which this job was launched.

Started: The timestamp of when the job was initiated by Tower.

Finished: The timestamp of when the job was completed.

Elapsed: The total time the job took.

Launched By: The name of the user, job, or scheduled scan job which launched this job.

Inventory: The inventory selected to run this job against.

Machine Credential: The name of the credential used in this job.

Verbosity: The level of verbosity set when creating the job template.

Extra Variables: Any extra variables passed when creating the job template are displayed here.

By clicking on these items, where appropriate, you can view the corresponding job templates, projects, and other Tower objects.

21.3.2. Standard Out Pane

The Standard Out pane shows the full results of running the Ansible playbook. This shows the same information you would see if you ran it through the Ansible command line, and can be useful for debugging. You can view the event summary, host status, and the host events. The icons at the top right corner of the Standard Out pane allow you to toggle the output as a main view or to download the output.

21.3.2.1. Events Summary

The events summary captures a tally of events that were run as part of this playbook:

the number of plays

the number of tasks

the number of hosts

the elapsed time to run the job template

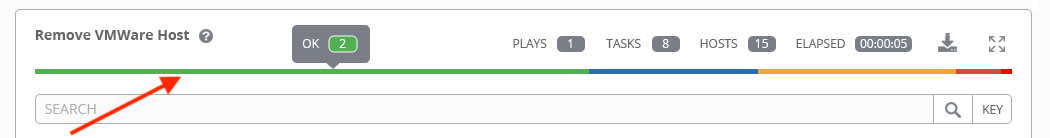

21.3.2.2. Host Status Bar

The host status bar runs across the top of the Standard Out pane. Hover over a section of the host status bar and the number of hosts associated with that particular status displays.

21.3.2.3. Search

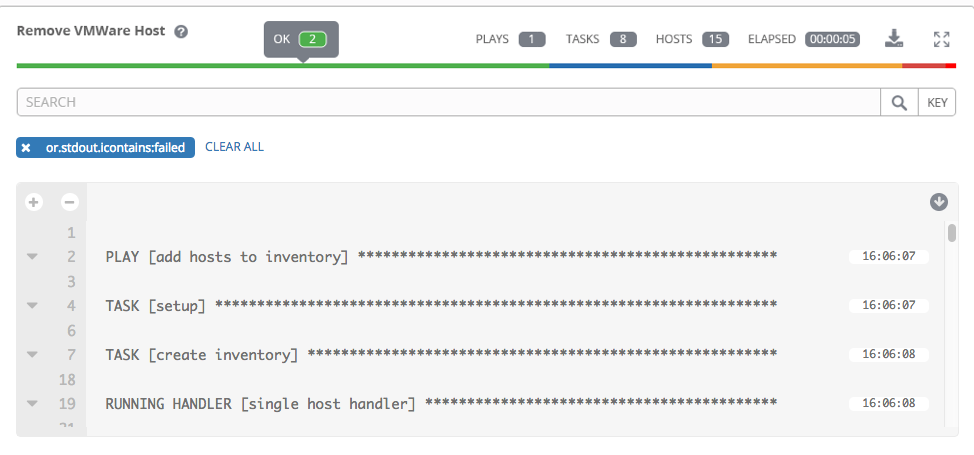

Use the Tower Search to look up specific events, hostnames, and their statuses. To filter only certain hosts with a particular status, specify one of the following valid statuses:

Changed: the playbook task actually executed. Since Ansible tasks should be written to be idempotent, tasks may exit successfully without executing anything on the host. In these cases, the task would return Ok, but not Changed.

Failed: the task failed. Further playbook execution was stopped for this host.

OK: the playbook task returned “Ok”.

Unreachable: the host was unreachable from the network or had another fatal error associated with it.

Skipped: the playbook task was skipped because no change was necessary for the host to reach the target state.

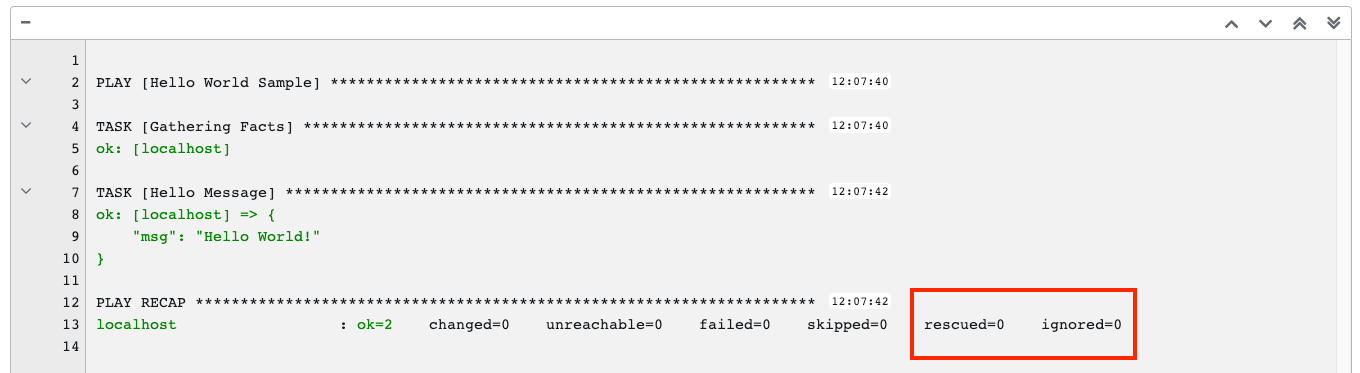

Rescued: introduced in Ansible 2.8, this shows the tasks that failed and then executed a rescue section.

Ignored: introduced in Ansible 2.8, this shows the tasks that failed and have ignore_errors: yes configured.

These statuses also display at the bottom of each Standard Out pane, in a group of “stats” called the Host Summary fields.

The example below shows a search with only failed hosts.

For more details about using the Tower Search, refer to the Search chapter.

21.3.2.4. Standard output view

The standard output view displays all the events that occur on a particular job. By default, all rows are expanded so that all the details are displayed. Use the collapse-all button to switch to a view that only contains the headers for plays and tasks. Click the expand button to view all lines of the standard output.

Alternatively, you can display all the details of a specific play or task by clicking on the arrow icons next to them. Click an arrow from sideways to downward to expand the lines associated with that play or task. Click the arrow back to the sideways position to collapse and hide the lines.

Things to note when viewing details in the expand/collapse mode:

Each displayed line that is not collapsed has a corresponding line number and start time.

An expand/collapse icon is at the start of any play or task after the play or task has completed.

If querying for a particular play or task, it will appear collapsed at the end of its completed process.

In some cases, an error message will appear, stating that the output may be too large to display. This occurs when there are more than 4000 events. Use the search and filter for specific events to bypass the error.

When you hover over an event line in the Standard Out view, a tooltip displays above that line, giving the total hosts affected by this task and an option to view further details about the breakdown of their statuses.

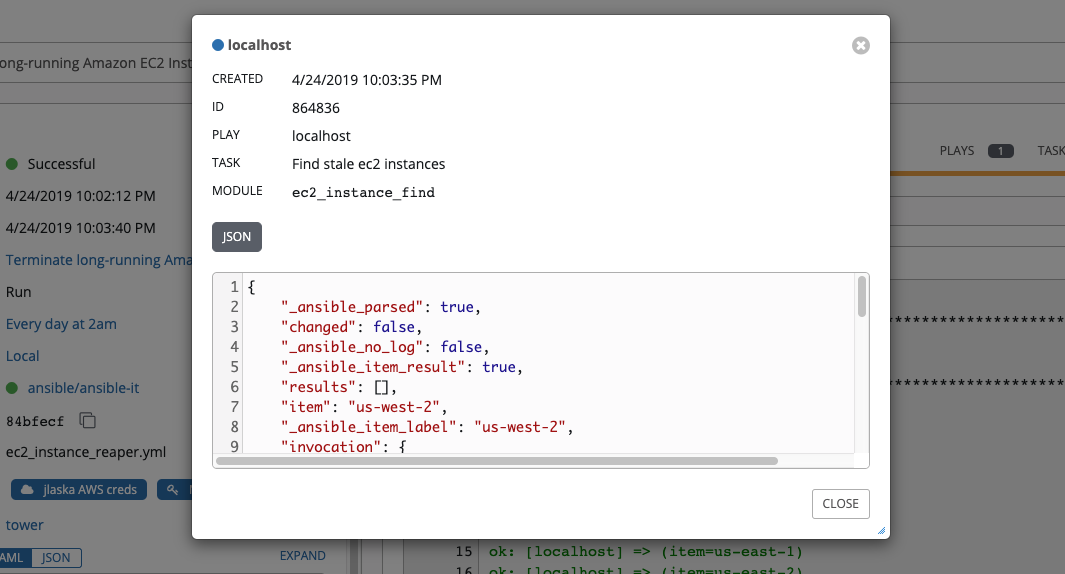

Click on a line of an event from the Standard Out pane and a Host Events dialog displays in a separate window. This window shows the host that was affected by that particular event.

Note

Prior to 3.7, Ansible Tower had a limitation on the maximum number of events it could record as a result of playbook runs (around 2 billion events). The upgrade to 3.7 involves progressively migrating all historical playbook output to a new expanded data format that removes this limitation. This migration process is gradual, and happens automatically in the background after installation is complete. Installations with very large amounts of historical job output (tens or hundreds of GB of output) may notice missing job output until migration is complete. The most recent data shows up at the top of the output, followed by older events. Migrating jobs with a large number of events may take longer than jobs with a smaller number.

21.3.2.5. Host Events

The Host Events dialog shows information about the host affected by the selected event and its associated play and task:

the Host

the Status

a unique ID

a Created time stamp

the name of the Play

the name of the Task

if applicable, the Ansible Module for the task, and any arguments for that module

the Standard Out of the task

To view the results in JSON format, click on the JSON tab.

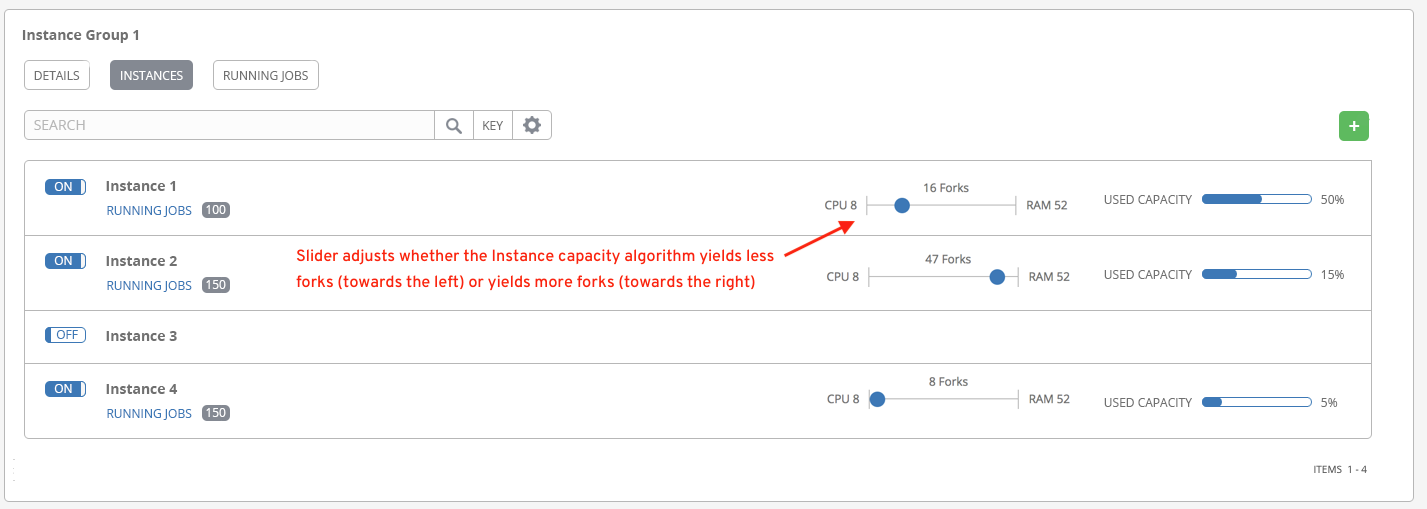

21.4. Ansible Tower Capacity Determination and Job Impact

This section describes how to determine capacity for instance groups and its impact on your jobs. For container groups, see Container capacity limits in the Ansible Tower Administration Guide.

The Ansible Tower capacity system determines how many jobs can run on an instance given the amount of resources available to the instance and the size of the jobs that are running (referred to as Impact). The algorithm used to determine this is based entirely on two things:

How much memory is available to the system (mem_capacity)

How much CPU is available to the system (cpu_capacity)

Capacity also impacts Instance Groups. Groups are made up of instances, and an instance can be assigned to multiple groups. This means that impact on one instance can potentially affect the overall capacity of other Groups.

Instance Groups (not instances themselves) can be assigned to be used by jobs at various levels (see Clustering). When the Task Manager is preparing its graph to determine which group a job will run on, it will commit the capacity of an Instance Group to a job that hasn’t started or isn’t ready to start yet.

Finally, in smaller configurations, if only one instance is available for a job to run, the Task Manager will allow that job to run on the instance even if it pushes the instance over capacity. This guarantees that jobs themselves won’t get stuck as a result of an under-provisioned system.

Therefore, Capacity and Impact are not a zero-sum system relative to jobs and instances/Instance Groups.

For information on sliced jobs and their impact to capacity, see Job slice execution behavior.

21.4.1. Resource determination for capacity algorithm

The capacity algorithms are defined in order to determine how many forks a system is capable of running simultaneously. This controls how many systems Ansible itself will communicate with simultaneously. Increasing the number of forks a Tower system is running will, in general, allow jobs to run faster by performing more work in parallel. The trade-off is that this will increase the load on the system, which could cause work to slow down overall.

Tower can operate in two modes when determining capacity. mem_capacity (the default) will allow you to over-commit CPU resources while protecting the system from running out of memory. If most of your work is not CPU-bound, then selecting this mode will maximize the number of forks.

21.4.1.1. Memory relative capacity

mem_capacity is calculated relative to the amount of memory needed per fork. Taking into account the overhead for Tower’s internal components, this comes out to be about 100MB per fork. When considering the amount of memory available to Ansible jobs, the capacity algorithm will reserve 2GB of memory to account for the presence of other Tower services. The algorithm formula for this is:

(mem - 2048) / mem_per_fork

As an example:

(4096 - 2048) / 100 == ~20

Therefore, a system with 4GB of memory would be capable of running 20 forks. The value mem_per_fork can be controlled by setting the Tower settings value (or environment variable) SYSTEM_TASK_FORKS_MEM, which defaults to 100.
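The memory formula above can be sketched as a small function. This is an illustration of the arithmetic only, not Tower's actual implementation; the function name, the rounding down, and the floor of one fork are assumptions.

```python
def mem_capacity(mem_mb: int, mem_per_fork: int = 100, reserved_mb: int = 2048) -> int:
    """Forks that fit in memory after reserving space for Tower services.

    mem_per_fork corresponds to SYSTEM_TASK_FORKS_MEM (default 100).
    """
    usable_mb = mem_mb - reserved_mb
    # Assumption: round down, and never report fewer than one fork.
    return max(1, usable_mb // mem_per_fork)


print(mem_capacity(4096))  # 20, matching the 4GB example above
```

Raising `mem_per_fork` lowers the result, which is how a larger SYSTEM_TASK_FORKS_MEM value makes the estimate more conservative.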

21.4.1.2. CPU relative capacity

Often, Ansible workloads can be fairly CPU-bound. In these cases, sometimes reducing the simultaneous workload allows more tasks to run faster and reduces the average time-to-completion of those jobs.

Just as the Tower mem_capacity algorithm uses the amount of memory needed per fork, the cpu_capacity algorithm looks at the amount of CPU resources needed per fork. The baseline value for this is 4 forks per core. The algorithm formula for this is:

cpus * fork_per_cpu

For example, a 4-core system:

4 * 4 == 16

The value fork_per_cpu can be controlled by setting the Tower settings value (or environment variable) SYSTEM_TASK_FORKS_CPU which defaults to 4.
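The CPU formula is a single multiplication, sketched below with the default of 4 forks per core. The function name is hypothetical and the code only restates the arithmetic from this section.

```python
def cpu_capacity(cpus: int, fork_per_cpu: int = 4) -> int:
    """Forks allowed by CPU count; fork_per_cpu maps to SYSTEM_TASK_FORKS_CPU."""
    return cpus * fork_per_cpu


print(cpu_capacity(4))  # 16, matching the 4-core example above
```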

21.4.2. Capacity job impacts

When selecting the capacity, it’s important to understand how each job type affects capacity.

It’s helpful to understand what forks mean to Ansible: https://www.ansible.com/blog/ansible-performance-tuning (see the section on “Know Your Forks”).

The default forks value for Ansible is 5. However, if Tower knows that you’re running against fewer systems than that, then the actual concurrency value will be lower.

When a job is run, Tower will add 1 to the number of forks selected to compensate for the Ansible parent process. So if you are running a playbook against 5 systems with a forks value of 5, then the actual forks value from the perspective of Job Impact will be 6.

21.4.2.1. Impact of job types in Tower

Jobs and Ad-hoc jobs follow the above model, forks + 1. If you set a fork value on your job template, your job capacity value will be the minimum of the forks value supplied, and the number of hosts that you have, plus one. The plus one is to account for the parent Ansible process.

Instance capacity determines which jobs get assigned to any specific instance. Jobs and ad hoc commands use more capacity if they have a higher forks value.

Other job types have a fixed impact:

Inventory Updates: 1

Project Updates: 1

System Jobs: 5

If you don’t set a forks value on your job template, your job will use Ansible’s default forks value of five. Even though Ansible defaults to five forks, it will use fewer if your job has fewer than five hosts. In general, setting a forks value higher than what the system is capable of could cause trouble by running out of memory or over-committing CPU. So, the job template fork values that you use should fit on the system. If you have playbooks using 1000 forks but none of your systems individually has that much capacity, then your systems are undersized and at risk of performance or resource issues.
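The impact rules in this section can be summarized in a short sketch. The job type labels and the convention that `forks=0` means "not set on the job template" are assumptions made for illustration; only the numbers come from the text above.

```python
# Fixed impacts listed above; the dictionary keys are hypothetical labels.
FIXED_IMPACT = {"inventory_update": 1, "project_update": 1, "system_job": 5}
ANSIBLE_DEFAULT_FORKS = 5


def job_impact(job_type: str, forks: int = 0, host_count: int = 0) -> int:
    """Estimate the capacity a job consumes.

    Playbook runs and ad hoc commands use min(forks, hosts) + 1, where the
    extra 1 accounts for the parent Ansible process.
    """
    if job_type in FIXED_IMPACT:
        return FIXED_IMPACT[job_type]
    # Assumption: forks == 0 means no forks value was set on the template.
    effective_forks = forks if forks > 0 else ANSIBLE_DEFAULT_FORKS
    return min(effective_forks, host_count) + 1


print(job_impact("job", forks=5, host_count=5))  # 6
print(job_impact("job", forks=0, host_count=3))  # 4
print(job_impact("project_update"))              # 1
```

The second call shows why a small inventory lowers impact: with only three hosts, the default of five forks is never fully used.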

21.4.2.2. Selecting the right capacity

Selecting a capacity out of the CPU-bound or the memory-bound capacity limits is, in essence, selecting between the minimum and maximum number of forks. In the above examples, the CPU capacity would allow a maximum of 16 forks while the memory capacity would allow 20. For some systems, the disparity between these can be large, and you may often want a balance between the two.

The instance field capacity_adjustment allows you to select how much of one or the other you want to consider. It is represented as a value between 0.0 and 1.0. If set to a value of 1.0, then the largest value will be used. The above example involves memory capacity, so a value of 20 forks would be selected. If set to a value of 0.0 then the smallest value will be used. A value of 0.5 would be a 50/50 balance between the two algorithms which would be 18:

16 + (20 - 16) * 0.5 == 18
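The balance formula generalizes to a linear interpolation between the smaller and the larger of the two capacities. The sketch below restates it; the function name and the truncation to a whole number of forks are assumptions.

```python
def adjusted_capacity(cpu_cap: int, mem_cap: int, capacity_adjustment: float) -> int:
    """Interpolate between the smaller and larger capacity value."""
    if not 0.0 <= capacity_adjustment <= 1.0:
        raise ValueError("capacity_adjustment must be between 0.0 and 1.0")
    low, high = min(cpu_cap, mem_cap), max(cpu_cap, mem_cap)
    # Assumption: fractional forks are truncated.
    return int(low + (high - low) * capacity_adjustment)


print(adjusted_capacity(16, 20, 0.5))  # 18
```

With an adjustment of 0.0 the function returns 16 for this example, and with 1.0 it returns 20, matching the two extremes described above.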

To view or edit the capacity in the Tower user interface, select the Instances tab of the Instance Group.

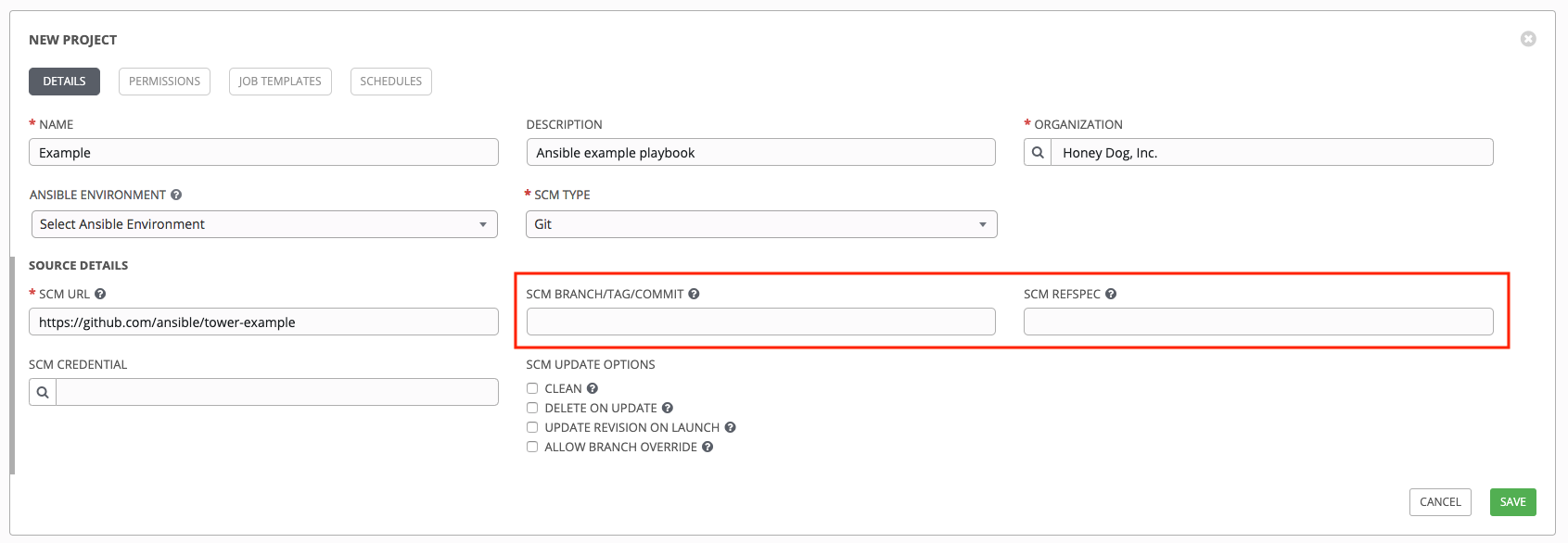

21.5. Job branch overriding

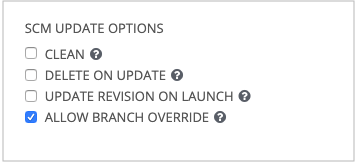

Projects specify the branch, tag, or reference to use from source control in the scm_branch field. These are represented by the values specified in the Project Details fields as shown.

Projects have the option to “Allow Branch Override”. When checked, project admins can delegate branch selection to the job templates that use that project (requiring only project use_role).

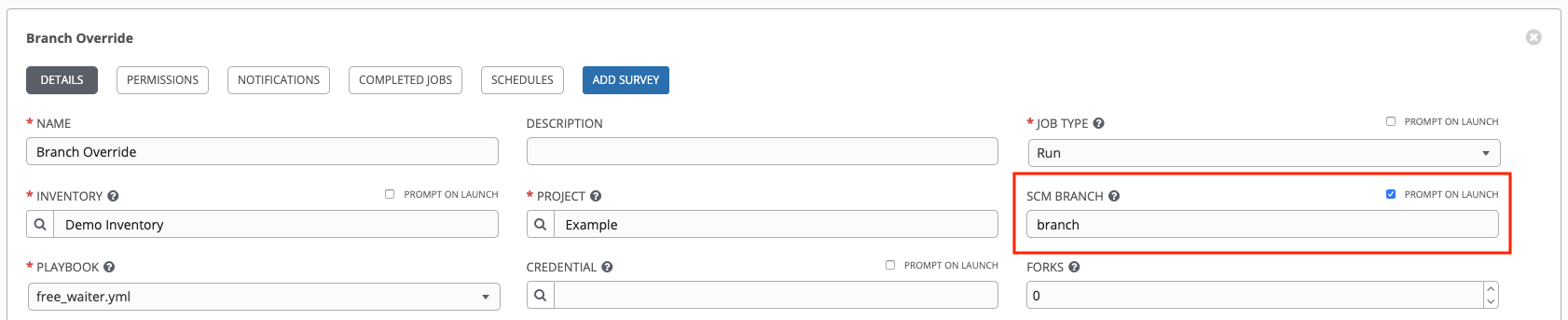

Admins of job templates can further delegate that ability to users executing the job template (requiring only job template execute_role) by checking the Prompt on Launch box next to the SCM Branch field of the job template.

21.5.1. Source tree copy behavior

Every job run has its own private data directory. This directory contains a copy of the project source tree for the given scm_branch the job is running. Jobs are free to make changes to the project folder and make use of those changes while they are still running. This folder is temporary and is cleaned up at the end of the job run.

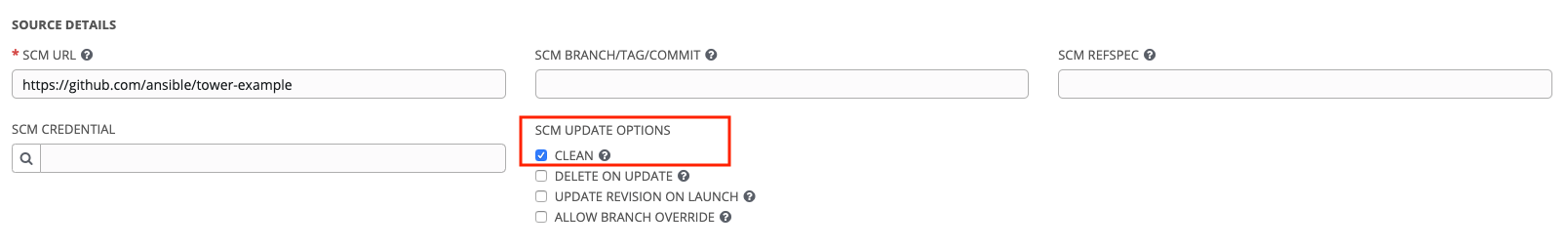

If Clean is checked, Tower discards modified files in its local copy of the repository through use of the force parameter in its respective Ansible modules pertaining to git, Subversion, and Mercurial.

21.5.2. Project revision behavior

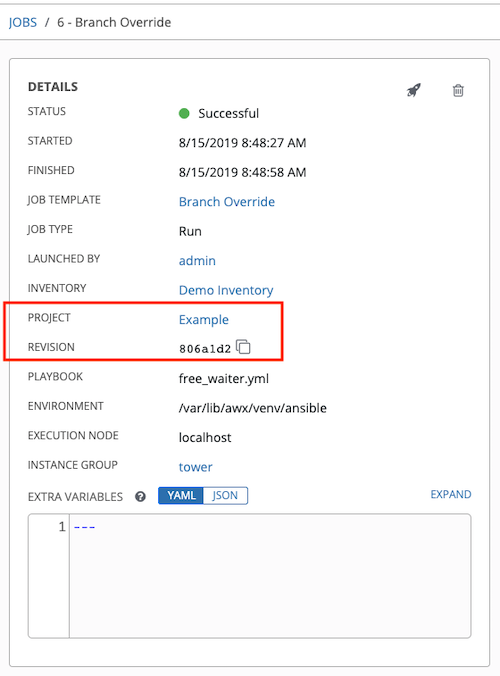

Typically, during a project update, the revision of the default branch (specified in the SCM Branch field of the project) is stored when updated, and jobs using that project will employ this revision. When a job provides a non-default SCM Branch (not a commit hash or tag), the newest revision is pulled from the source control remote immediately before the job starts. This revision is shown in the Revision field of the job and its respective project update.

Consequently, offline job runs are impossible for non-default branches. To be sure that a job is running a static version from source control, use tags or commit hashes. Project updates do not save the revision of all branches, only the project default branch.

The SCM Branch field is not validated, so the project must update to ensure it is valid. If this field is provided or prompted for, the Playbook field of job templates will not be validated, and you will have to launch the job template in order to verify the presence of the expected playbook.

21.5.3. Git Refspec

The SCM Refspec field specifies which extra references the update should download from the remote. Examples are:

refs/*:refs/remotes/origin/*: fetches all references, including remotes of the remote

refs/pull/*:refs/remotes/origin/pull/* (GitHub-specific): fetches all refs for all pull requests

refs/pull/62/head:refs/remotes/origin/pull/62/head: fetches the ref for that one GitHub pull request

For large projects, you should consider the performance impact of using the first or second example here.
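To see what these refspecs do, the sketch below maps a remote ref name to the local name it would be stored under. It is a simplified model of refspec matching with a single `*` wildcard, written for illustration; it does not call git, and the function name is hypothetical.

```python
from typing import Optional


def apply_refspec(refspec: str, remote_ref: str) -> Optional[str]:
    """Return the local ref a remote ref maps to, or None if it does not match.

    Simplified model: at most one "*" on each side, and "*" may span slashes.
    """
    src, dst = refspec.lstrip("+").split(":", 1)
    if "*" not in src:
        return dst if remote_ref == src else None
    prefix, suffix = src.split("*", 1)
    if len(remote_ref) < len(prefix) + len(suffix):
        return None
    if not (remote_ref.startswith(prefix) and remote_ref.endswith(suffix)):
        return None
    # The text matched by "*" is substituted into the destination pattern.
    matched = remote_ref[len(prefix):len(remote_ref) - len(suffix)]
    return dst.replace("*", matched, 1)


print(apply_refspec("refs/pull/*:refs/remotes/origin/pull/*", "refs/pull/62/head"))
print(apply_refspec("refs/pull/*:refs/remotes/origin/pull/*", "refs/heads/main"))
```

The first call prints `refs/remotes/origin/pull/62/head`; the second prints `None` because a branch ref does not match the pull request pattern.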

The SCM Refspec parameter affects the availability of the project branch, and can allow access to references not otherwise available. The examples above allow the user to supply a pull request from the SCM Branch, which would not be possible without the SCM Refspec field.

The Ansible git module fetches refs/heads/* by default. This means that a project’s branches and tags (and commit hashes therein) can be used as the SCM Branch if SCM Refspec is blank. The value specified in the SCM Refspec field affects which SCM Branch fields can be used as overrides. Project updates (of any type) will perform an extra git fetch command to pull that refspec from the remote.

For example, you could set up a project that allows branch override with the first or second refspec example, then use this project in a job template that prompts for the SCM Branch. A client could launch the job template when a new pull request is created, providing the branch pull/N/head, and the job template would run against the provided GitHub pull request reference.
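A client in that workflow needs to turn a pull request number into the branch value it supplies at launch. The sketch below builds such a body; using `scm_branch` as the key of the launch request is an assumption to verify against the Tower API reference, and the pull request number 62 is only an example.

```python
import json


def pull_request_launch_payload(pr_number: int) -> str:
    """Build a JSON launch body that overrides the SCM Branch with a PR ref."""
    if pr_number <= 0:
        raise ValueError("pr_number must be a positive integer")
    # Assumed field name for the prompted SCM Branch value.
    return json.dumps({"scm_branch": f"pull/{pr_number}/head"})


payload = pull_request_launch_payload(62)
print(payload)
```

This only works when the project's SCM Refspec fetches pull request refs, as in the first or second example above.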

For more information on the Ansible git module, see https://docs.ansible.com/ansible/latest/modules/git_module.html.